original scene

Abstract

Virtual environments (VEs) are pivotal for virtual, augmented, and mixed reality systems. Despite advances in 3D generation and reconstruction, the direct creation of 3D objects within an established 3D scene (represented as NeRF) for novel VE creation remains a relatively unexplored domain. This process is complex, requiring not only the generation of high-quality 3D objects but also their seamless integration into the existing scene. To this end, we propose a novel pipeline featuring an intuitive interface, dubbed GO-NeRF. Our approach takes text prompts and user-specified regions as inputs and leverages the scene context to generate 3D objects within the scene. We employ a compositional rendering formulation that effectively integrates the generated 3D objects into the scene, utilizing optimized 3D-aware opacity maps to avoid unintended modifications to the original scene. Furthermore, we develop tailored optimization objectives and training strategies to enhance the model’s ability to capture scene context and mitigate artifacts, such as floaters, that may occur while optimizing 3D objects within the scene. Extensive experiments conducted on both forward-facing and 360° scenes demonstrate the superior performance of our proposed method in generating objects that harmonize with surrounding scenes and synthesizing high-quality novel view images. We are committed to making our code publicly available.

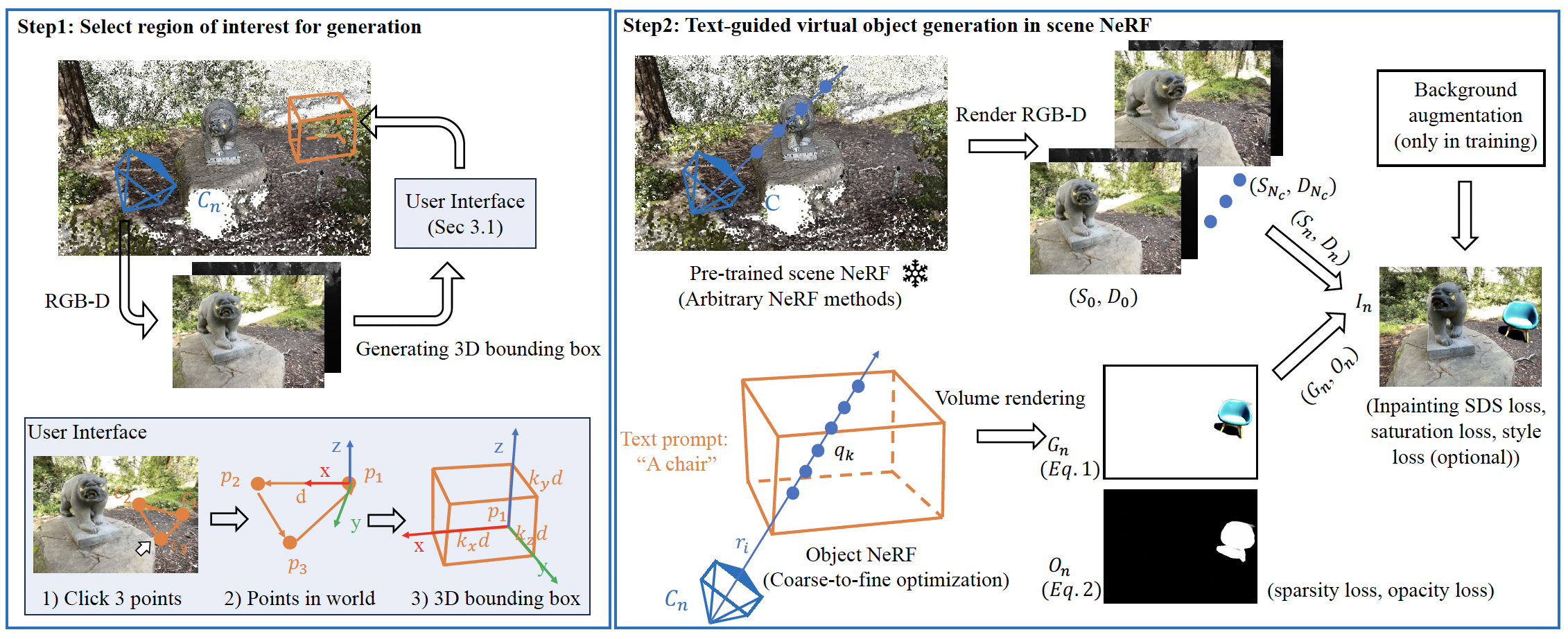

Pipeline

Left: a user-friendly interface where the ROI for generation can be specified by clicking three points on the image plane. Right: a compositional rendering pipeline that seamlessly composites generated 3D objects into the scene neural radiance field.
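The compositing idea on the right of the pipeline can be illustrated with a minimal sketch. It assumes standard per-pixel alpha blending of an already-rendered object image over an already-rendered scene image, weighted by an opacity map; the function name `composite` and the toy arrays are hypothetical and are not taken from the GO-NeRF code, whose actual formulation may differ.

```python
import numpy as np

def composite(scene_rgb, object_rgb, object_opacity):
    """Blend an object render over a scene render, pixel by pixel.

    scene_rgb, object_rgb: float arrays of shape (H, W, 3) in [0, 1].
    object_opacity: float array of shape (H, W) in [0, 1].
    Assumption: plain alpha blending, used here only for illustration.
    """
    # Add a trailing channel axis so the opacity broadcasts over RGB.
    alpha = np.clip(object_opacity, 0.0, 1.0)[..., None]
    return alpha * object_rgb + (1.0 - alpha) * scene_rgb

# Toy 2x2 example: a dark scene and a bright object.
scene = np.full((2, 2, 3), 0.2)
obj = np.full((2, 2, 3), 0.9)
opacity = np.array([[0.0, 1.0],
                    [0.5, 0.0]])
out = composite(scene, obj, opacity)
```

Wherever the opacity is zero, the output equals the scene render exactly, which is the property that keeps regions outside the generated object unmodified.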

Same scene with different text prompts

"basket of fruits"

"a pumpkin"

"A pile of messy clothes"

"A wooden box"

"a lego house"

original scene

"a spider on the ground"

"A gray hedgehog lying on the ground"

original scene

"a stone"

"a stone chair"

original scene

"a boat"

"an old boat"

Same text prompt with different scene styles

"a cat"

"a cat"

Generate multiple objects within the scene

"Pikachu + Traffic cone + Backpack"

"Bird + Chair"

Stereoscopic Videos

To watch these in a VR headset, open this webpage in the headset's web browser and choose one of the following cases. The selected case then plays as a 3D video.